AI Calling: How to Kickoff a Career in Data Science

Paul Mahler remembers the day in May 2013 he decided to make the switch.

The former economist was waiting at a bus stop in Washington, D.C., reading the New York Times on his smartphone. He was struck by the story of a statistics professor who wrote an app that let computers review screenplays. It launched the academic into a lucrative new career in Hollywood.

“That seemed like a monumental breakthrough. I decided I wanted to get into data science, too,” said Mahler. Today, he’s a senior data scientist in Silicon Valley, helping NVIDIA’s customers use AI to make their own advances.

Like Mahler, Eyal Toledano made a big left turn a decade into his career. He describes “an existential crisis … I thought if I have any talent, I should try to do something I’m really proud of that’s bigger than myself and even if I fail, I will love every minute,” he said.

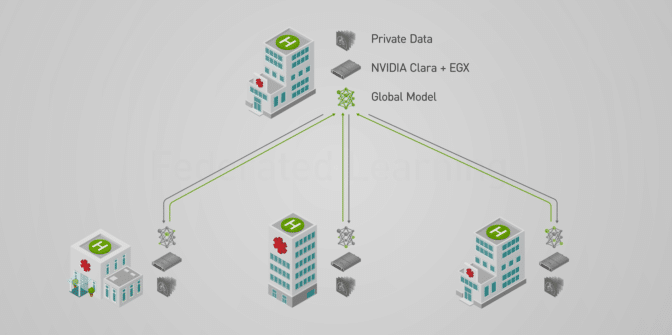

Then “an old friend from my undergrad days told me about his diving accident in a remote area and how no one could read his X-rays. He said we should build a database of images [using AI] to facilitate diagnoses in situations where people need this help — it was the first time I devoted myself to a seed of an idea that came from someone else,” Toledano recalled.

The two friends co-founded Zebra Medical Vision in 2014 to apply AI to medical imaging. For Toledano, there was only one way into the emerging field of deep learning.

“Roll up your sleeves, shovel some dirt and join the effort, that’s what helped me — in data science, you really need to get dirty,” he said.

Plenty of Room in the Sandbox

The field is still wide open. Data scientist tops the list of best jobs in America, according to a 2019 ranking from Glassdoor, a service that connects 67 million monthly visitors with 12 million job postings. It pegged the median base salary for an entry-level data scientist at $108,000, rated job satisfaction at 4.3 out of 5 and counted 6,510 job openings.

The job of data engineer was not far behind, with a median base salary of $100,000, job satisfaction of 4.2 out of 5 and 4,524 openings.

A 2018 study by recruiters at Burtch Works adds detail to the picture. It estimated starting salaries range from $95,000 to $168,000, depending on skill level. Data scientists come to the job with a wide range of academic backgrounds including math/statistics (25%), computer science and physical science (20% each), engineering (18%) and general business (8%). Nearly half had Ph.D.s and 40% held master’s degrees.

“Now that data is the new oil, data science is one of the most important jobs,” said Alen Capalik, co-founder and chief executive of startup FASTDATA.io, a developer of GPU software backed in part by NVIDIA. “Demand is incredible, so the unemployment in data science is zero.”

Like Mahler and Toledano, Capalik jumped in head first. “I just read a lot to understand data, the data pipeline and how customers use their data — different verticals use data differently,” he said.

The Nuts and Bolts

Data scientists are hybrid creatures. Some are statisticians who learned to code. Some are Python wizards learning the nuances of data analytics and machine learning. Others are domain experts who wanted to be part of the next big thing in computing.

All face a common flow of tasks. They must:

- Identify business problems suited for big data

- Set up and maintain tool chains

- Gather large, relevant datasets

- Structure datasets to address business concerns

- Select an appropriate AI model family

- Optimize model hyperparameters

- Postprocess machine learning models

- Critically analyze the results
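The middle of that flow (gather data, pick a model family, tune hyperparameters, analyze results) can be sketched in a few lines. This is a toy illustration only, not a workflow described by anyone quoted here: the synthetic two-cluster dataset, the choice of a k-nearest-neighbors classifier, and every function name are assumptions made for the example.

```python
import random
from collections import Counter


def make_dataset(n_per_class, seed=0):
    """Gather: synthesize two well-separated 2-D clusters (a stand-in for real data)."""
    rng = random.Random(seed)
    rows = []
    for label, center in ((0, 0.0), (1, 5.0)):
        for _ in range(n_per_class):
            point = (rng.gauss(center, 1.0), rng.gauss(center, 1.0))
            rows.append((point, label))
    rng.shuffle(rows)
    return rows


def split(rows, train_frac=0.6, val_frac=0.2):
    """Structure: carve the data into training, validation and held-out test sets."""
    n_train = int(len(rows) * train_frac)
    n_val = int(len(rows) * val_frac)
    return rows[:n_train], rows[n_train:n_train + n_val], rows[n_train + n_val:]


def knn_predict(train, point, k):
    """Model family (assumed for this sketch): k-nearest neighbors, majority vote."""
    def squared_distance(row):
        (x0, x1), _ = row
        return (x0 - point[0]) ** 2 + (x1 - point[1]) ** 2

    nearest = sorted(train, key=squared_distance)[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


def accuracy(train, rows, k):
    """Analyze: fraction of rows whose predicted label matches the true label."""
    hits = sum(knn_predict(train, point, k) == label for point, label in rows)
    return hits / len(rows)


def tune_k(train, val, candidates=(1, 3, 5, 7)):
    """Optimize: keep the hyperparameter k that scores best on the validation set."""
    return max(candidates, key=lambda k: accuracy(train, val, k))


rows = make_dataset(100)
train, val, test = split(rows)
best_k = tune_k(train, val)
test_accuracy = accuracy(train, test, best_k)
print(f"best k = {best_k}, held-out accuracy = {test_accuracy:.2f}")
```

Tuning on a validation split and reporting accuracy on a separate test split keeps the final number honest, since the hyperparameter was never chosen using the test data.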

“The unicorn data scientists do it all, from setting up a server to presenting to the board,” said Mahler.

But in reality, the field is quickly segmenting into subtasks. Data engineers work on the front end of the process, massaging datasets through the so-called extract, transform and load (ETL) process.
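For readers new to the term, here is a minimal sketch of an extract, transform and load pass using only the Python standard library. The CSV contents, column names and `scans` table are invented for illustration; a production pipeline would read from real sources and use dedicated tooling.

```python
import csv
import io
import sqlite3

# Hypothetical raw export with stray whitespace, mixed case and a missing value.
RAW_CSV = """patient_id,age,scan_type
 101 ,34,xray
102,,CT
103,58, XRAY
"""


def extract(text):
    """Extract: parse the raw CSV text into a list of dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: trim whitespace, normalize case, cast types, drop incomplete rows."""
    cleaned = []
    for row in rows:
        age = row["age"].strip()
        if not age:
            continue  # skip records with no age rather than guessing a value
        cleaned.append(
            (int(row["patient_id"].strip()), int(age), row["scan_type"].strip().lower())
        )
    return cleaned


def load(records, conn):
    """Load: write the cleaned records into a SQLite table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS scans (patient_id INTEGER, age INTEGER, scan_type TEXT)"
    )
    conn.executemany("INSERT INTO scans VALUES (?, ?, ?)", records)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
loaded = conn.execute(
    "SELECT patient_id, age, scan_type FROM scans ORDER BY patient_id"
).fetchall()
print(loaded)
```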

Big operations may employ data librarians, privacy experts and AI pipeline engineers who ensure systems deliver time-sensitive recommendations fast.

“The proliferation of titles is another sign the field is maturing,” said Mahler.

Play a Game, Learn the Job

One of the fastest, most popular ways into the field is to have some fun with AI by entering Kaggle contests, said Mahler. The online matches provide forums with real-world problems and code examples to get started. “People on our NVIDIA RAPIDS product team are continually on Kaggle contests,” he said.

Wins can lead to jobs, too. Owkin, an NVIDIA partner that designs AI software for healthcare, declares on its website, “Our data scientists are among the best in the world, with several Kaggle Masters.”

These days, at least some formal study is recommended. Online courses from fast.ai aim to give experienced programmers a jumpstart into deep learning. Co-founder Rachel Thomas maintains a list of her talks encouraging everyone, especially women, to get into data science.

We compiled our own list of online courses in data science given by the likes of MIT, Google and NVIDIA’s Deep Learning Institute. Here are some other great resources:

- A 2017 ranking of top courses by KD Nuggets, a news site for data scientists

- Classes on edX, an online service co-founded by MIT and Harvard

- Classes on Coursera, founded by two Stanford professors

- Andrew Ng’s machine learning class on Coursera (more than 7 million views)

- Classes at Udacity, founded by two other Stanford instructors

“Having a strong grasp of linear algebra, probability and statistical modeling is important for creating and interpreting AI models,” said Mahler. “A lot of employers require a degree in data or computer science and a strong understanding of Python,” he added.

“I was never one to look for degrees,” countered Capalik of FASTDATA.io. “Having real-world experience is better because the first day on a job you will find out things people never showed you in school,” he said.

Both agreed the best data scientists have a strong creative streak. And employers covet data scientists who are imaginative problem solvers.

Getting Picked for a Job

One startup gives job candidates a test of technical skills, but the test is just part of the screening process, said Capalik.

“I like to just look someone in the eye and ask a few questions,” he said. “You want to know if they are a problem solver and can work with a team because data science is a team effort — even Michael Jordan needed a team to win.”

To pass the test and get an interview with Capalik, “you need to know what the data pipeline looks like, how data is collected, where it’s stored and how to work around the nuances and inefficiencies to solve problems with algorithms,” he said.

Toledano of Zebra is suspicious of candidates with pat answers.

“This is an experimental science,” he said. “The results are asymptotic to your ability to run many experiments, so you need to come up with different pieces and ideas quickly and test them in training experiments over and over again.”

“People who want to solve a problem once might be very intelligent, but they will probably miss things. Don’t build a bow and arrow, build a catapult to throw a gazillion arrows — each one a potential solution you can evaluate quickly,” he added.

Chris Rowen, a veteran entrepreneur and chief executive of AI startup BabbleLabs, is impressed by candidates who can explain their work. “Understand the theory about why models work on which problems and why,” he advised.

The Developer’s Path

Unlike the pure digital world of IT where answers are right or wrong, data science challenges often have no definitive answer, so they invite the curious who like to explore options and tradeoffs.

Indeed, IT and data science are radically different worlds.

IT departments use carefully structured processes to check code in and out and verify compliance. They write apps once that may be used for years. Data science teams, on the other hand, conduct experiments continuously with models based on probability curves and frequently massage models and datasets.

“Software engineering is more of a straight line while data science is a loop,” said James Kobielus, a veteran market watcher and lead AI analyst at Wikibon.

That said, it’s also true that “data science is the core of the next-generation developer, really,” Kobielus said. Although many subject matter experts jump into data science and learn how to code, “even more people are coming in from general app development,” he said, in part because that’s where the money is these days.

Clouds, Robots and Soft Skills

Whatever path they take, data scientists need to be familiar with the cloud. Many AI projects are born on remote servers using containers and modern orchestration techniques.

They should also understand the latest mobile and edge hardware and its constraints.

“There’s a lot of work going on in robotics using trial-and-error algorithms for reinforcement learning. This is beyond traditional data science, so personnel shortages are more acute there — and computer vision in cameras could not be hotter,” Kobielus said.

A diplomat’s negotiation skills come in handy, too. Data scientists are often agents of change, disrupting jobs and processes, so it’s important to make allies.

A Philosophical Shift

It sounds like a lot of work, but don’t be intimidated.

“I don’t know that I’ve made such a huge shift,” said Rowen of BabbleLabs, his first startup to leverage data science.

“The nomenclature has changed. The idea that the problem’s specs are buried in the data is a philosophical shift, but at the root of it, I’m doing something analogous to what I’ve done in much of my career,” he said.

In the past Rowen explored the “computational profile of a problem and found the processor to make it work. Now, we turn that upside down. We look at what’s at the heart of a computation and what data we need to do it — that insight carried me into deep learning,” he said.

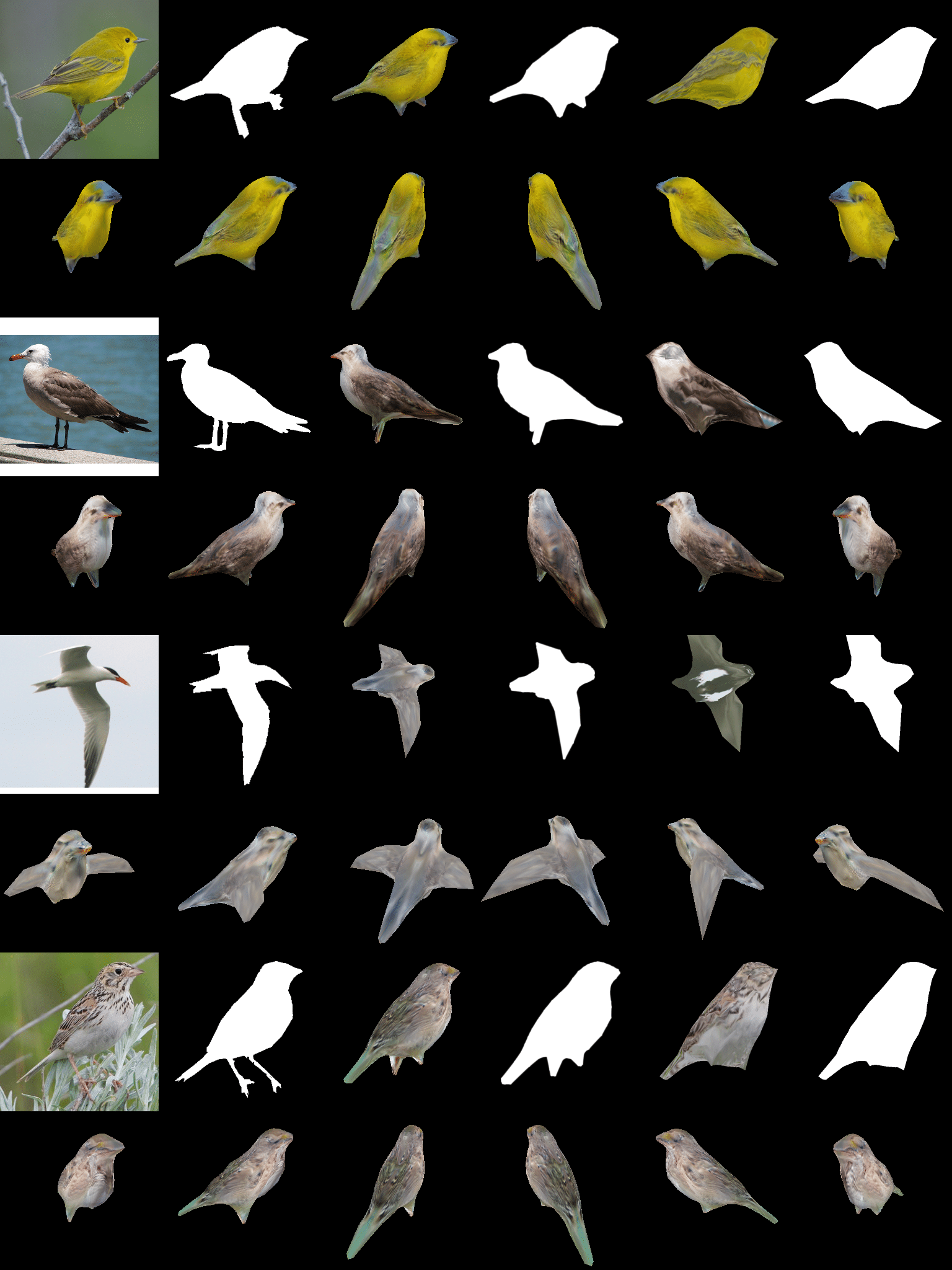

In a May 2018 talk, fast.ai co-founder Thomas was equally encouraging. Using transfer learning, you can do excellent AI work by training just the last few layers of a neural network, she said. And you don’t always need big data. For example, one system was trained to recognize images of baseball vs. cricket using just 30 pictures.
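The idea of training only the last layers can be shown with a toy sketch. It is not the fast.ai approach or the baseball-vs.-cricket system: the "pretrained" layer here is just a fixed pair of hand-picked weights, the data are synthetic 2-D points rather than images, and all names are hypothetical. What it does illustrate is the mechanic: the early layer stays frozen, and only a small final layer is fit on 30 labeled examples.

```python
import math
import random

# Stand-in for a pretrained layer (assumption): these weights are never updated.
FROZEN_WEIGHTS = ((0.5, 0.5), (0.5, -0.5))


def frozen_features(x):
    """Run an input through the frozen layer to get reusable features."""
    return [math.tanh(w[0] * x[0] + w[1] * x[1]) for w in FROZEN_WEIGHTS]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def make_examples(n_per_class, rng):
    """Synthetic two-class data standing in for a small labeled image set."""
    examples = []
    for label, center in ((0, -2.0), (1, 2.0)):
        for _ in range(n_per_class):
            examples.append(((rng.gauss(center, 0.5), rng.gauss(center, 0.5)), label))
    return examples


def train_head(examples, epochs=200, lr=0.5):
    """Fit only the final logistic layer with full-batch gradient descent."""
    feats = [(frozen_features(x), y) for x, y in examples]
    weights, bias = [0.0, 0.0], 0.0
    n = len(feats)
    for _ in range(epochs):
        grad_w, grad_b = [0.0, 0.0], 0.0
        for h, y in feats:
            err = sigmoid(weights[0] * h[0] + weights[1] * h[1] + bias) - y
            grad_w[0] += err * h[0]
            grad_w[1] += err * h[1]
            grad_b += err
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


def predict(x, weights, bias):
    h = frozen_features(x)
    return int(sigmoid(weights[0] * h[0] + weights[1] * h[1] + bias) >= 0.5)


rng = random.Random(0)
train = make_examples(15, rng)      # 30 labeled examples in total
held_out = make_examples(50, rng)   # fresh points the head never saw
head_weights, head_bias = train_head(train)
held_out_accuracy = sum(
    predict(x, head_weights, head_bias) == y for x, y in held_out
) / len(held_out)
print(f"held-out accuracy with 30 training examples: {held_out_accuracy:.2f}")
```

Because the frozen layer already turns raw inputs into useful features, the trainable part has only three parameters, which is why so few examples are enough in this toy setting.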

“The world needs more people in AI, and the barriers are lower than you thought,” she added.

The post AI Calling: How to Kickoff a Career in Data Science appeared first on The Official NVIDIA Blog.