[P] Learning Digital Circuits: A Journey Through Weight Invariant Self-Pruning Neural Networks

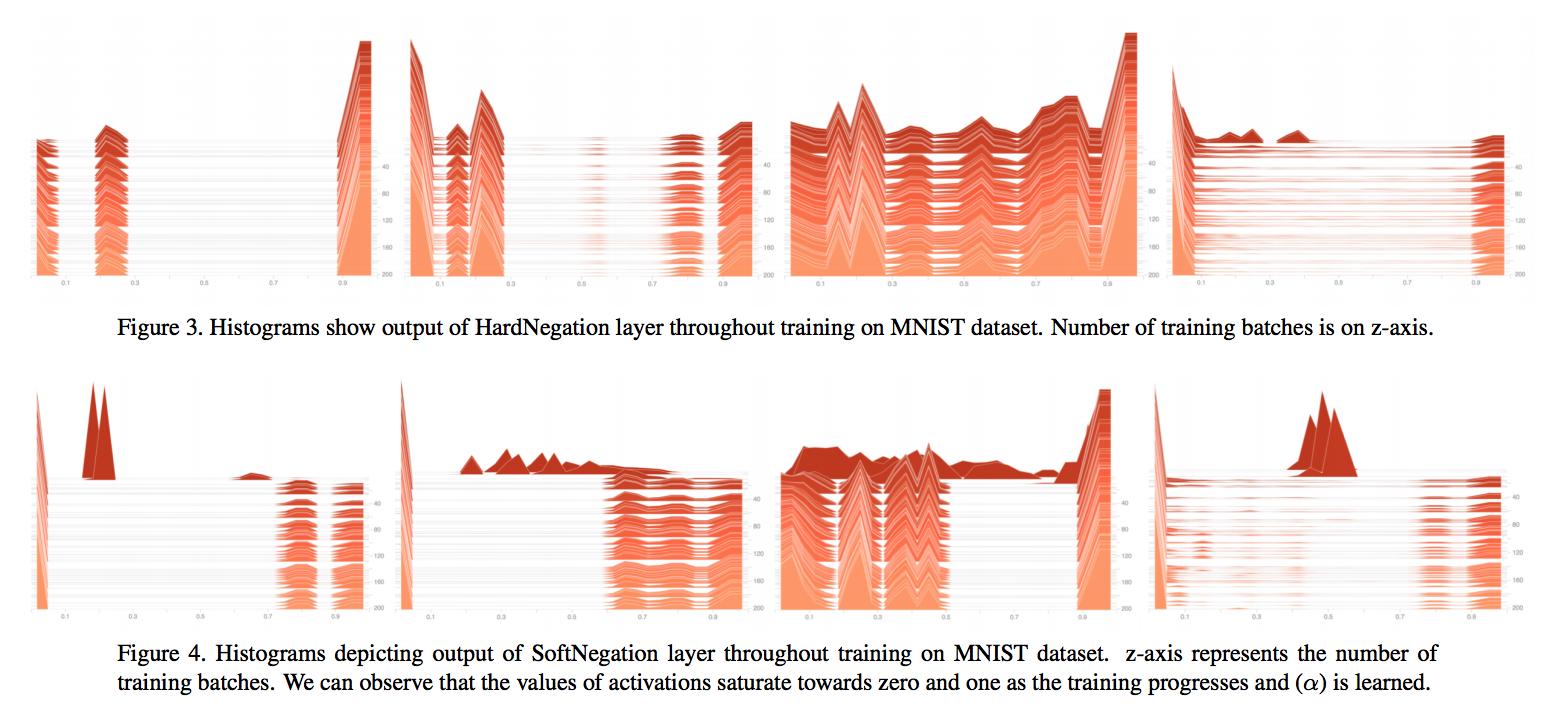

TLDR:

- Learns weight-agnostic topologies that mimic digital circuits, using gradient descent.
- Sparse nets with weights restricted to 0 or 1 can be learned by introducing Bernoulli initialization into the BinaryConnect framework.
- In a binarized net, a neuron plus its activation acts like an OR gate. A network composed purely of OR gates fails on complex problems. BatchNorm acts like a NOT gate, which lets the network form NOR gates and learn more complicated functions.
- Learning topologies doesn't necessarily require signed weights (see SuperMasks: https://arxiv.org/abs/1905.01067).
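To make the gate analogy concrete, here is a minimal toy sketch in plain Python. It is not the code from the repo. It assumes binary inputs, 0/1 weights, a step activation, and models BatchNorm as a fixed affine map with a negative scale (the names `or_neuron`, `batchnorm_not`, and the values `gamma=-1.0`, `mean=0.5` are all illustrative choices of mine). An OR-only network is monotone (flipping an input from 0 to 1 can never turn the output off), so it cannot represent XOR. Adding the inversion gives NOR, which is functionally complete.

```python
from itertools import product

def step(x):
    # Binary activation: fires when the pre-activation is positive.
    return 1 if x > 0 else 0

def or_neuron(inputs, weights):
    # With weights in {0, 1}, the weighted sum counts the active inputs
    # that are wired in, so the thresholded output is their logical OR.
    return step(sum(w * x for w, x in zip(weights, inputs)))

def batchnorm_not(x, gamma=-1.0, mean=0.5, beta=0.0):
    # Assumption: BatchNorm is modelled as a fixed affine map with a
    # negative scale, which inverts a binary signal after thresholding.
    return step(gamma * (x - mean) + beta)

def nor_neuron(inputs, weights):
    # OR neuron followed by the inverting normalisation gives NOR.
    return batchnorm_not(or_neuron(inputs, weights))

def xor_from_nor(a, b):
    # Standard five-gate NOR construction: four NORs yield XNOR,
    # and a final NOR used as an inverter yields XOR.
    n1 = nor_neuron([a, b], [1, 1])
    n2 = nor_neuron([a, n1], [1, 1])
    n3 = nor_neuron([b, n1], [1, 1])
    xnor = nor_neuron([n2, n3], [1, 1])
    return nor_neuron([xnor], [1])

for a, b in product([0, 1], repeat=2):
    print(a, b, or_neuron([a, b], [1, 1]), nor_neuron([a, b], [1, 1]), xor_from_nor(a, b))
```

Setting a weight to 0 simply disconnects that input, e.g. `or_neuron([1, 0], [0, 1])` ignores the first input, which is how a 0/1 weight mask doubles as a topology.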

Paper: https://arxiv.org/abs/1909.00052

Code: https://github.com/AgrawalAmey/learning-digital-net

Colab: https://colab.research.google.com/github/AgrawalAmey/learning-digital-net/

submitted by /u/agrawalamey
